Last week, Facebook announced that it would be taking steps to prevent its users from being fooled into reading “fake news.”

In a post on Facebook, Mark Zuckerberg explained that the company is trying to “build a more informed community and fight misinformation.” Facebook’s plan is to implement tools that let users report stories shared on the platform that they believe may be “hoaxes.”

From there, fact-checkers from groups like Snopes, ABC News, and the Poynter Institute will determine whether the story is, in fact, untrue. If it is, “you’ll see a flag on the story saying it has been disputed, and that story may be less likely to show up in News Feed,” though users can still “read and share the story.” Disputed stories also cannot be promoted through ads on the site.

The move comes as a response to criticism and concern that “fake news” (essentially, stories fabricated by shady websites to generate traffic and revenue) was so prevalent during the election that many voters were confused as to what was true and what was just made up.

But Zuckerberg’s announcement hasn’t been met with universal praise. Some critics have voiced concern that the new tools could be used to censor the media. The fear is that they will be used to favor certain political persuasions and silence voices that don’t fall in line with the site’s perceived political agenda.

So, are those fears valid?

A Fair Concern?

Concerns that Facebook could favor certain political ideologies aren’t totally unfounded. This spring, a former Facebook worker alleged that some “news curators,” who decided which trending topics to display across the site, would routinely suppress news that favored conservative viewpoints. The revelations led Facebook to change its methodology for displaying trending topics and led Zuckerberg to hold a personal meeting with a group of conservative political commentators.

However, Facebook maintained that the problem wasn’t an institutional issue, but a personnel one, acknowledging the possibility that biased curators essentially went rogue.

But just because Facebook has dealt with bias in the past doesn’t mean its solution to the fake news problem is a bad thing.

Lies vs. Bias

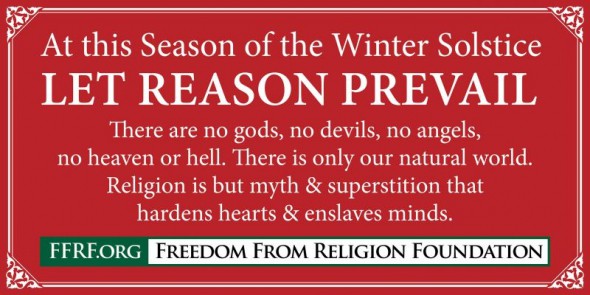

“Fake news” poses a unique problem for modern consumers of mass media. The “stories” it presents aren’t simply biased perspectives on the news; they are made-up news.

Here are a few actual headlines that went viral during the election season: “Pope Francis shocks world, endorses Donald Trump for president”; “FBI agent suspected in Hillary email leaks found dead in apartment in murder-suicide”; “Pence: Michelle Obama is the most vulgar First Lady we’ve ever had.” These stories, and many more like them that were read millions of times during the run-up to the election, weren’t merely biased. They were works of fiction.

The events “reported” never happened.

Concerns that labeling and burying fake news would censor certain perspectives conflate two very different things: bias and lies.

Many modern media consumers constantly attempt to find hints of journalistic bias in the stories they see and read online. They’ve been informally trained to try to determine if stories are not simply attempting to tell them facts, but also to persuade them to think differently.

But biased news stories aren’t the same thing as stories that are entirely fake. A biased story may emphasize some key facts and omit others. It may contain opinion. It might intentionally use quotes that make its subject look really good or really bad. But none of these things makes it untrue. It may not contain the entire truth, but if it doesn’t contain falsehoods, the events it describes still actually happened.

It’s now the responsibility of the reader either to get information from sources known for being unbiased or to read the news from a variety of sources across the political spectrum.

Fake news is different, though.

Sure, the headlines are likely written to appeal to readers of a certain political persuasion and are often obviously biased (playing on what’s known as “confirmation bias,” our tendency to favor things that validate our own opinions), but they are also lies. They aren’t simply omitting truth; they are presenting lies.

This is why they are so dangerous. And, this is why Facebook sees a responsibility to label them.

Fighting the Problem

Fears of censorship are rooted in a mistrust of big institutions that control what we see and hear in the media. This type of skepticism can be healthy. But, it can also backfire—because what Facebook is doing to fight fake news isn’t censoring opinions that it doesn’t like; it’s preventing lies from being presented as facts.

This isn’t an example of censorship; it’s an example of media ethics.

Should we be wary of censorship and the suppression of free speech? Absolutely. But, we should also be concerned with blatant lies overtly attempting to deceive media consumers.

The reason that Facebook is taking steps to stop fake news is the same reason we shouldn’t be overly concerned with it permanently veering into censorship. Facebook’s decision is guided by the ultimate check and balance: the invisible hand of user satisfaction.

Its goal is to maintain a service that people like to use and trust enough to scroll through their feeds for hours every day. Violating that trust, by censoring ideas instead of weeding out hoaxes, jeopardizes its entire business model. It also gives us, the users, the power to hold the company accountable.

If Facebook’s tools do censor ideas instead of labeling obvious lies, it’s our job as users to call the company on it. That sense of outrage, that a basic ethic is being violated, is how these checks come into play.

There’s an idea that there is, in fact, a fourth branch of government that provides a final check and balance against power: the press. And, in order to be effective, the press doesn’t just have to be protected from censorship, but also from violations of its most basic purpose: presenting facts.

Fake news threatens one of our most important tools in holding leaders and people in power accountable. Labeling it for what it is doesn’t represent an act of censorship.

It’s an act of integrity.